RL: Why It Matters More Than Ever

A comprehensive guide to Reinforcement Learning — from Q-Learning and DQN to A3C, PPO, and real-world projects. Covers the math, the code, the key papers, and why RL is more important than ever.

RL:Why It Matters More Than Ever

In 2016, the world witnessed a moment that redefined the boundaries of artificial intelligence. A system called AlphaGo, created by DeepMind, faced off against Lee Sedol, one of the greatest Go players in history and won. It wasn’t just another AI victory. It was a turning point. Unlike traditional chess engines that rely on exhaustive search or hand coded rules, AlphaGo taught itself to play through millions of simulated games, refining its strategies over time until it could outthink its creators.

Imagine a Go board filled with black and white stones. Each move AlphaGo makes isn’t based on memorized plays. It’s the result of trial and error, where every victory and defeat becomes data. Over thousands of matches against itself, it began to recognize which patterns led to success forming a kind of machine intuition. What made that victory extraordinary was not that the AI beat a human, but how it learned to do it. AlphaGo’s intelligence wasn’t pre-written; it emerged through interaction and feedback the very essence of Reinforcement Learning (RL).

Reinforcement Learning differs from most of the AI systems people talk about today. In supervised learning, a model learns from labeled examples it’s like having a teacher provide the correct answers to every question. In unsupervised learning, models explore patterns in data without explicit guidance. But in reinforcement learning, there is no teacher. Instead, the model learns by acting taking an action in an environment, observing the result, and adjusting based on the feedback it receives. It is an ongoing loop of experimentation and improvement, the closest computational analogy to how humans and animals learn through experience.

You can visualize this as a circular cycle. The agent (our decision-maker) observes its environment, performs an action, and receives a reward a signal of how good or bad that action was. Based on that reward, it updates its policy, which is its internal playbook for what to do next. The more it interacts, the better its decisions become. The process looks beautifully simple in code

for episode in range(num_episodes): state = env.reset() done = False while not done: action = policy(state) # Agent decides next_state, reward, done, _, _ = env.step(action) # Environment responds update_policy(state, action, reward, next_state) # Learn from feedback state = next_state

Each episode represents a full attempt by the agent to reach a goal. Each iteration within it is a small moment of learning the agent takes an action, sees how the world reacts, and slightly refines its behavior. Over time, this repeated process transforms random behavior into deliberate intelligence.

Picture a robotic arm trying to grasp a cup. The first few attempts are clumsy; it drops the cup again and again. But with each try, it receives feedback: how close it was, whether it succeeded, and what its sensors observed. Slowly, it adjusts refining its movements until, one day, the cup is lifted smoothly. That moment when trial becomes mastery is what makes reinforcement learning extraordinary.

The applications of this idea are everywhere. In robotics, RL allows machines to adapt to the unpredictability of the real world, learning balance, grip, and movement. In autonomous driving, cars use RL to make split-second decisions that keep passengers safe. In research environments like OpenAI Gym and MuJoCo, developers train virtual agents to play Atari games, walk, jump, and even control drone flight. Even in digital spaces, platforms like YouTube and Netflix use reinforcement learning to refine their recommendation systems, constantly adjusting what they show users based on engagement each click or skip becomes a tiny reward or penalty that shapes what comes next.

Here’s a small glimpse of how RL actually looks in one of those simple environments a Q-Learning agent exploring the classic FrozenLake problem from OpenAI Gym:

import gym import numpy as np

env = gym.make(“FrozenLake-v1”, is_slippery=False) Q = np.zeros((env.observation_space.n, env.action_space.n))

alpha = 0.8 # learning rate gamma = 0.9 # discount factor epsilon = 0.1 # exploration probability

for episode in range(1000): state, done = env.reset()[0], False while not done: if np.random.rand() < epsilon: action = env.action_space.sample() # Explore else: action = np.argmax(Q[state]) # Exploit known info

next_state, reward, done, _, _ = env.step(action) Q[state, action] = Q[state, action] + alpha * (reward + gamma * np.max(Q[next_state]) - Q[state, action]) state = next_state

What’s remarkable about this tiny block of code is that it contains no answers, no teacher, and no labels. The agent starts clueless slipping, failing, and retrying. But by the end, it develops its own map of what works and what doesn’t, encoded in the Q table. It has learned by doing.

Reinforcement Learning has since evolved far beyond the Go board. It powers factory robots that can assemble delicate machinery, autonomous drones that can coordinate in flight, and adaptive control systems that manage energy usage in smart cities. It even influences how conversational models like ChatGPT are aligned with human values, through a process called Reinforcement Learning from Human Feedback (RLHF) where models are refined not just for accuracy, but for helpfulness and coherence.

In essence, RL represents a shift in the very definition of machine intelligence from systems that memorize information to systems that learn through interaction. It’s no longer about teaching machines what to do; it’s about giving them the ability to discover what works. The beauty of RL lies in this simplicity a loop of curiosity, feedback, and growth the same loop that drives evolution, learning, and intelligence itself.

Where Reinforcement Learning Fits in AI

If AlphaGo showed what reinforcement learning could achieve, understanding where it sits in the broader world of AI reveals why it’s so fundamentally different. Machine learning, as a whole, is about finding patterns that help systems make decisions. But not all learning is created equal. There are three main branches that define how machines acquire knowledge and reinforcement learning stands apart from the rest.

In supervised learning, we act as the teachers. The model learns by studying pairs of inputs and outputs labeled examples that show it exactly what the right answer looks like. Think of it as a student learning with an answer key. The process is powerful but limited: the model can only generalize from what it’s shown. It doesn’t explore, and it doesn’t invent; it just learns to mimic patterns that already exist.

Unsupervised learning is more like giving that same student a stack of data with no answers and asking them to find structure in it. These systems search for clusters, relationships, or anomalies hidden within data. They can identify customer segments, compress information, or uncover hidden trends but they don’t act on what they learn. They’re observers, not participants.

Reinforcement learning, however, changes everything. There is no teacher, no labeled data, no static dataset. Instead, there is experience.

The model or agent exists within an environment, and every choice it makes affects what happens next. It takes an action, observes the outcome, and receives a reward a signal of success or failure. That feedback updates its internal decision strategy, known as a policy. Each round of interaction improves the policy, making future decisions slightly better.

This process is not just statistical learning it’s behavioral learning.

Imagine a cycle spinning endlessly:Agent → Action → Environment → Reward → Policy Update.Each pass through the loop sharpens the agent’s understanding of the world around it. At first, it acts blindly, like a baby exploring through touch. But with every reward, every correction, its behavior becomes more intentional. Over time, randomness gives way to intelligence.

This dialogue between the agent and the environment can be expressed in just a few lines of Python simple, but powerful enough to capture the entire philosophy of reinforcement learning.

for episode in range(num_episodes): state = env.reset() done = False

while not done: action = policy(state) # Agent decides next_state, reward, done, _, _ = env.step(action) # Environment responds update_policy(state, action, reward, next_state) # Learn from feedback state = next_state

What happens here is almost poetic. Each loop represents an episode a lifetime for the agent. It begins in an unknown state, makes decisions, collects experiences, and finally learns something about how to survive or succeed. Then it starts again, armed with slightly more wisdom. Repeat this process a thousand times, and you’ve captured the very mechanism that allows both animals and algorithms to adapt to their environments. To make this idea more tangible, imagine you’re training a puppy. The first time you say “sit,” the puppy has no clue what you mean. It might bark, jump, or wander off.

You try again, gently pressing it down, and when it finally sits, you reward it with a treat. That moment the connection between the action and the reward imprints a memory. The puppy realizes: sit = treat. After enough repetitions, it begins to sit automatically when it hears the word, anticipating the reward.

Now replace the puppy with an AI agent and the treat with a numerical signal. When the agent performs a desirable action, the environment gives it a positive reward. When it fails, it receives nothing or worse, a penalty. This simple system of incentives and consequences creates complex, intelligent behavior.

That’s what makes reinforcement learning so profound. Unlike supervised models that only interpret data or unsupervised models that only analyze it, reinforcement learning models live inside their data. They experiment, fail, adapt, and try again. Every mistake becomes part of their evolution.

At its core, RL sits at the intersection of control, decision-making, and curiosity. It bridges the gap between static pattern recognition and dynamic intelligence. And as you’ll see throughout this series, the same principles that helped AlphaGo master Go are the ones helping robots walk, drones navigate, and large language models like ChatGPT align their behavior with human values. Reinforcement learning isn’t just a branch of AI it’s the heartbeat of machines that learn to act.

What This Blog Will Give You

This blog isn’t about memorizing formulas or decoding walls of math it’s about understanding intelligence in motion.You’ll explore Reinforcement Learning (RL) not as an abstract concept, but as something alive a process you can see, tweak, and feel evolve right before your eyes.You’ll watch how curiosity becomes competence, how chaos turns into control, and how a few lines of Python can teach a machine to think, act, and adapt. Each post in this series will take you on a journey from intuition to implementation, from theory to experiment.

You’ll learn how algorithms like Q-Learning, Deep Q-Networks (DQN), and Proximal Policy Optimization (PPO)make decisions, improve through experience, and generalize their knowledge to entirely new challenges.But this won’t be a blog where you just read and nod.You’ll build, train, and observe coding agents that live and learn inside interactive environments like OpenAI Gym.

Imagine OpenAI Gym not as a simulation library, but as a vast digital ocean a world where agents learn to survive, explore, and adapt.Your agent is a small explorer drifting in these virtual waters.At first, it moves without purpose, colliding with invisible walls, tossed around by uncertainty.But with each episode, it begins to sense the currents the cause and effect of its own actions.Every misstep sends a ripple of feedback, every success adds a faint pattern to its memory.Slowly, these fragments of experience start forming something remarkable: a strategy.

You can imagine watching a graph rise beside it the reward curve, trembling at first with noise, then slowly stabilizing as the agent learns.That curve is the pulse of learning itself the mathematical heartbeat of curiosity transforming into competence.No single moment marks the breakthrough; instead, it’s a thousand small corrections that, together, form intelligence.

Let’s see that process in its simplest form Q-Learning.This is the foundation, the “hello world” of reinforcement learning.It captures the essence of the feedback loop: act, observe, and improve.In this small example, the agent must navigate the FrozenLake environment a slippery, treacherous world where one wrong step leads to failure.

import gym import numpy as np

Create the environment

env = gym.make(“FrozenLake-v1”, is_slippery=False)

Initialize the Q-table (state x action)

Q = np.zeros((env.observation_space.n, env.action_space.n))

Define hyperparameters

alpha = 0.8 # Learning rate gamma = 0.9 # Discount factor epsilon = 0.1 # Exploration rate

Training loop

for episode in range(1000): state, done = env.reset()[0], False while not done:

Epsilon-greedy strategy: explore or exploit

if np.random.rand() < epsilon: action = env.action_space.sample() # Explore the unknown else: action = np.argmax(Q[state]) # Use what we’ve learned

Step into the environment

next_state, reward, done, _, _ = env.step(action)

Bellman equation: the heart of learning

Q[state, action] += alpha * (reward + gamma * np.max(Q[next_state]) - Q[state, action])

Move to the next state

state = next_state

At the start, the agent knows nothing.It slips, falls, and fails over and over.Every episode is a new attempt, and every mistake leaves a trace in the Q-table, a memory of what not to do.The code above, though simple, embodies something profound: a model that improves itself without any explicit teacher.All it has are consequences rewards and penalties and through them, it constructs its own understanding of the world.

Now imagine this as a small boat in your ocean-themed OpenAI Gym world.In the first few trials, it drifts aimlessly, caught in the unpredictable tides of chance.But as time passes, the boat learns to read the sea turning at the right time, avoiding hidden rocks, and steering steadily toward its destination.No one is guiding it; there’s no map or compass.Its progress is entirely earned through experience.

That’s the quiet magic of reinforcement learning there are no shortcuts, no answers to memorize.Just an agent, an environment, and the unending rhythm of trial and error.Each episode, no matter how chaotic, brings it a little closer to mastery.

And while this story begins with a simple grid of ice, the same process plays out in games and worlds of every scale.In Atari classics like Pong and Breakout, RL agents learn reflexes sharper than any human player.In AlphaZero, they conquer Chess and Go from scratch not by mimicking humans, but by discovering entirely new strategies.In Dota 2 and StarCraft II, they learn cooperation, prediction, and resource management through billions of simulations, each one a small step forward in understanding.

These aren’t just game agents they’re digital explorers, proving the power of experience-based learning.The ocean never changes only the swimmers do.

The same principles that let an agent master a video game also enable robots to balance, drones to stabilize, and autonomous cars to navigate the real world.Reinforcement learning doesn’t memorize the rules of life it learns them by living inside the world’s feedback loop.

Through this series, you won’t just read about RL you’ll experience it.You’ll build your own learning systems, train them from scratch, and watch intelligence take shape in front of you.You’ll start with randomness, observe the rise of structure, and feel that subtle thrill when your first agent learns truly learns to succeed.

By the end, you won’t just know reinforcement learning.You’ll have witnessed it.You’ll have seen chaos transform into purpose not through code you wrote, but through the behavior your code inspired.And as your first agent reaches its goal whether on the frozen lake or in the deep blue of OpenAI Gym you’ll realize what makes this field so endlessly fascinating:it’s not about teaching a machine what to do…it’s about watching it figure it out.

# Roadmap for This Series

Now that you’ve seen what reinforcement learning feels like the trial, the feedback, the spark of learning it’s time to look ahead. This isn’t a one-off post. It’s a guided journey that takes you from the foundations of how machines learn to the point where you’ll be building and training your own intelligent agents. Each part builds on the last, carrying you step-by-step from theory to practice from the spark of an idea to working systems that actually learn and adapt.

We’ll begin by revisiting the popular papers that shaped the world of RL, from AlphaGo and Deep Q-Networks (DQN)to Proximal Policy Optimization (PPO) and Soft Actor-Critic (SAC) the research breakthroughs that turned experimentation into revolution. From there, we’ll dive into the math and intuition behind the magic: the Bellman equations, value functions, and policy gradients that explain why reinforcement learning works at its core.

Then, we’ll get our hands dirty with code implementations. You’ll open up Python, explore OpenAI Gymenvironments, and experiment with frameworks like PyTorch. You’ll see how each line of code maps directly to the ideas you’ve just learned translating equations into actions, and actions into behavior.

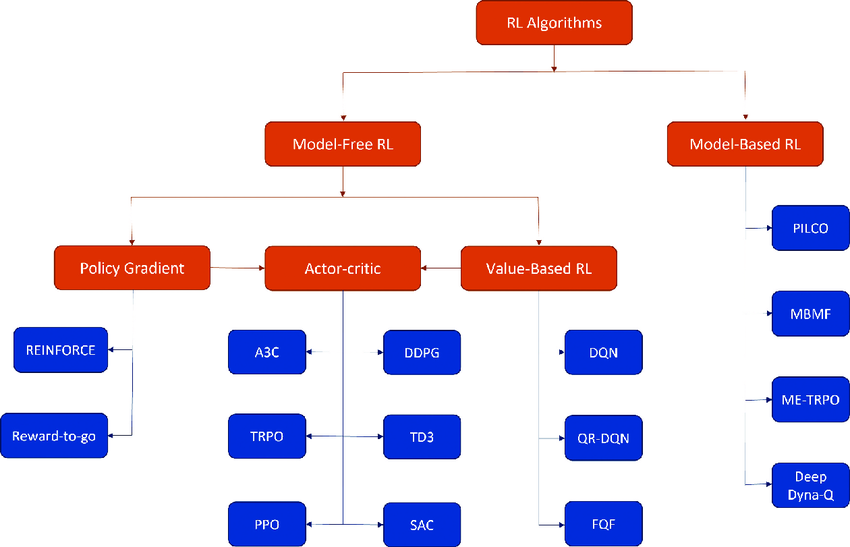

Once the fundamentals are clear, we’ll step into interactive playgrounds where learning becomes visual and alive. You’ll tune rewards, test strategies, and watch your agents evolve in real time from clumsy beginners to capable problem-solvers. After that, we’ll explore the architectures that define modern RL, comparing methods like DQN, A3C, PPO, and SAC, and understanding when and why to use each one.

Finally, we’ll close with projects you can build from simple games to real-world control tasks. You’ll train agents to balance poles, navigate mazes, and even make decisions in more complex environments that mimic reality. By the end, you’ll have both the theory and the intuition and you’ll know what it feels like to teach a machine how to learn.

Imagine a timeline stretching from left to right starting with theory, flowing through intuition and code, and ending in creation. Each post is a milestone, a point where the abstract becomes real.

Throughout this journey, the tone will stay conversational, confident, and slightly cinematic like a guided documentary through the mind of an AI. We’ll move quickly where excitement builds, slow down where understanding deepens, and close each chapter with energy and momentum. This series is made for AI enthusiasts, developers, and researchers who want to see not just how reinforcement learning works, but how it feels to build it from the ground up.

So, ready? Let’s teach machines how to learn.

In the next Section , we’ll dive into the papers and discoveries that defined reinforcement learning tracing the evolution from AlphaGo to DeepMind’s DQN, and beyond.

# Popular RL Papers Q-Learning (Watkins, 1989): The Birth of Model-Free Control

Q-Learning (Watkins, 1989): The First Practical Model-Free Control Algorithm

In 1989, Christopher J. C. H. Watkins introduced a breakthrough in reinforcement learning: Q-Learning. It was the first practical algorithm that could learn optimal behavior purely from experience, without knowing how the world works underneath. This work, later formalized in Watkins & Dayan (1992), became the foundation of modern RL—from Deep Q-Networks (DQN) to AlphaGo.

The Problem: Learning to Act Without a Model

Reinforcement learning studies how an agent interacts with an environment to maximize cumulative reward. At each step the agent observes a state $s_t$, takes an action $a_t$, receives a reward $r_{t+1}$, and moves to a new state $s_{t+1}$. The challenge is to learn which actions are best even when the dynamics are unknown. Earlier methods depended on transition models $P(s’ \mid s, a)$ or Monte Carlo policy evaluation. Watkins proposed learning value estimates directly from experience—no model needed.

The Core Idea: Action-Value Learning

The optimal action-value function $Q^*(s,a)$ satisfies the Bellman optimality equation:

\[Q^*(s,a) = \mathbb{E}\!\left[\, r_{t+1} + \gamma \max_{a'} Q^*(s_{t+1}, a') \,\mid\, s_t=s,\, a_t=a \right].\]If $Q^*(s,a)$ were known, the optimal policy is simply

\[\pi^*(s) = \arg\max_{a} Q^*(s,a).\]Since $Q^*$ is unknown, Q-Learning approximates it from experience.

The Q-Learning Update Rule

Given a transition $(s_t,a_t,r_{t+1},s_{t+1})$, the update is:

\[Q(s_t,a_t) \leftarrow Q(s_t,a_t) + \alpha \big[ r_{t+1} + \gamma \max_{a'} Q(s_{t+1},a') - Q(s_t,a_t) \big].\]Here $\alpha$ is the learning rate and $\gamma$ the discount factor. The term $\max_{a’}Q(s_{t+1},a’)$ bootstraps from the next state. Q-Learning is off-policy: it learns the greedy target while following an exploratory policy such as $\varepsilon$-greedy (choose a random action with probability $\varepsilon$, otherwise the greedy one).

Convergence: Theory Behind the Magic

In tabular settings, if every state–action pair is visited infinitely often and learning rates $\alpha_t$ satisfy the Robbins–Monro conditions $\sum_t\alpha_t=\infty$ and $\sum_t\alpha_t^2<\infty$, then $Q(s,a)$ converges to $Q^*(s,a)$ with probability 1. This proved that optimal control can emerge purely from experience without knowing transition probabilities in advance.

Why It Changed Everything

Q-Learning removed the need for a model and enabled trial-and-error learning in unknown environments. It extended naturally to function approximators (neural nets, trees, etc.), inspiring Double Q-Learning, Dueling Networks, and Deep Q-Networks (DQN). DeepMind’s 2015 paper Human-level control through deep reinforcement learning built directly on this idea, combining Q-Learning with deep CNNs to achieve human-level Atari performance.

Citations

Watkins, C. J. C. H. (1989). Learning from Delayed Rewards, PhD Thesis, King’s College Cambridge.

Watkins & Dayan (1992). Q-learning. Machine Learning 8: 279–292.

Mnih et al. (2015). Human-level control through deep reinforcement learning. Nature 518: 529–533.

Practical Implementation: Python Snippet

Below is a simple tabular Q-Learning implementation suitable for a discrete OpenAI Gym/Gymnasium environment like FrozenLake or Taxi-v3.

import numpy as np

import gymnasium as gym

import random

def epsilon_greedy(Q, state, n_actions, epsilon):

if random.random() < epsilon:

return random.randint(0, n_actions - 1)

return int(np.argmax(Q[state]))

def q_learning(env, episodes=2000, alpha=0.1, gamma=0.99,

epsilon=1.0, epsilon_decay=0.995, epsilon_min=0.05):

n_actions = env.action_space.n

n_states = env.observation_space.n

Q = np.zeros((n_states, n_actions))

for ep in range(episodes):

state, _ = env.reset()

done = False

while not done:

action = epsilon_greedy(Q, state, n_actions, epsilon)

next_state, reward, terminated, truncated, _ = env.step(action)

done = terminated or truncated

# Q-learning update (Watkins, 1989)

best_next = np.max(Q[next_state])

td_target = reward + gamma * best_next

td_error = td_target - Q[state, action]

Q[state, action] += alpha * td_error

state = next_state

epsilon = max(epsilon_min, epsilon * epsilon_decay)

return Q

# Example run

env = gym.make("FrozenLake-v1", is_slippery=False)

Q = q_learning(env)

print("Learned Q-table:")

print(Q)

Key takeaway: Each line of the update rule is a direct numerical realization of Watkins’ Bellman equation.

Visualizing the Bellman Equation

Think of it as a self-consistent equation for the universe of optimal decisions:

┌───────────────────────────────┐

│ Current state–action: (s, a) │

└───────────────────────────────┘

│

▼

Take action → Receive reward r

│

▼

Next state s' → Evaluate best future value max_a' Q(s', a')

│

▼

Combine → r + γ * max_a' Q(s', a')

│

▼

Update Q(s,a) ← Old Q(s,a) + α * (Target − Old)

This bootstrapping is what allows an agent to learn long-term outcomes from immediate experiences.

Limitations and Later Improvements

Although elegant, Watkins’ formulation had some practical challenges:

| Limitation | Solution |

|---|---|

| Over-estimation bias from max operator | Double Q-Learning |

| Slow convergence in large spaces | Function approximation (Deep Q-Networks) |

| Poor exploration | Entropy regularization / ε decay / Soft-Q |

| Instability with neural networks | Experience Replay + Target Networks (DQN) |

These refinements show how Q-Learning served as the seed for an entire field of algorithms.

Watkins’ 1989 thesis “Learning from Delayed Rewards” was a quiet revolution.It formalized the dream of learning purely from interaction without explicit teaching or supervision.Every RL agent today, from game-playing AIs to autonomous vehicles, still whispers the same update rule at its core.

Deep Q-Networks (DQN, DeepMind 2015): Bridging Perception and Control

In 2015, Volodymyr Mnih et al. at DeepMind achieved one of the most defining milestones in AI: they scaled Watkins’ Q-Learning into the high-dimensional world of raw perception using deep convolutional neural networks (CNNs). This system known as the Deep Q-Network (DQN) learned to play 49 Atari 2600 games directly from pixels, sometimes surpassing human performance.It marked the first time a single algorithm could see, act, and improve without any game-specific engineering.

Historical Context: Why the Atari Breakthrough Mattered

Before 2015, RL was largely confined to simple, low-dimensional domains — mazes, grids, or robotic joints where the state space could be enumerated.But perception-based environments (images, video) posed a fatal scaling problem: tabular Q-Learning explodes combinatorially with each new pixel dimension.

DeepMind’s DQN changed that by introducing representation learning.The network didn’t just store Q-values — it understood spatial and temporal regularities from pixels.

From Tabular to Deep Q-Learning

a) Classical Q-Learning recap

\[Q(s,a) \leftarrow Q(s,a) + \alpha \Big[r + \gamma \max_{a'} Q(s',a') - Q(s,a)\Big]\]This update iteratively drives $Q$ toward the Bellman optimality fixed point. In tabular settings, every $(s,a)$ pair has its own entry.

b) DQN’s key idea — function approximation

Replace the table with a neural network $Q(s,a;\,\theta)$ and train by minimizing a squared temporal-difference loss with a target network:

\[L(\theta) \;=\; \Big(r + \gamma \max_{a'} Q(s',a';\,\theta^-) \;-\; Q(s,a;\,\theta)\Big)^2\]Here $\theta$ are the parameters of the online network, and $\theta^-$ those of the target network. Gradients of $L$ backpropagate through the convolutional layers, enabling the agent to generalize across similar states.

Stabilization Mechanisms — The Hidden Genius

Naively combining Q-Learning with neural nets quickly diverges.DeepMind introduced two elegant yet powerful fixes:

| Technique | Problem Solved | Mechanism |

|---|---|---|

| Experience Replay | Temporal correlation between samples | Store past transitions (s,a,r,s’) → sample random mini-batches to approximate i.i.d. data |

| Target Network | Moving targets destabilize gradient updates | Freeze a copy Q(s,a;θ⁻) for N steps → periodically sync with online network |

Together, these created a stable, data-efficient RL pipeline that finally made deep RL feasible.

Architecture and Training Loop

The DQN used a three-layer CNN to process stacked frames (84 × 84 pixels × 4).Outputs were Q-values for each discrete action (typically 18 Atari controls).

Simplified Deep Q-Learning loop

q_net = CNN_QNetwork()

target_net = copy.deepcopy(q_net)

replay = deque(maxlen=100000)

epsilon = 1.0

for step in range(total_steps):

a = env.action_space.sample() if random.random() < epsilon else np.argmax(q_net(s))

s2, r, done, _ = env.step(a)

replay.append((s, a, r, s2, done))

# Sample random batch

batch = random.sample(replay, 32)

targets = []

for s_b, a_b, r_b, s2_b, d_b in batch:

y = r_b if d_b else r_b + gamma * np.max(target_net(s2_b))

q_vals = q_net(s_b)

q_vals[a_b] = y

targets.append(q_vals)

q_net.train_on_batch(batch_states, targets)

if step % 10000 == 0:

target_net.load_state_dict(q_net.state_dict())

Training ran for 50 million frames per game, updating every four steps with γ = 0.99 and RMSProp optimizer.Exploration ε decayed from 1.0 → 0.1 over 1 M frames.

Emergent Behavior — Breakout and Beyond

Initially, the agent flails randomly; after ~200 k steps it learns to hit the ball; by 1 M steps it discovers tunneling carving a hole through bricks to trap the ball above the wall for infinite reward.

Such emergent behavior illustrated that reward driven learning could produce human-like ingenuity.

Results and Impact

Across 49 Atari titles, DQN achieved a median human-normalized score of 121% beating humans in many games like Breakout, Enduro, and Seaquest.Performance was consistent without any hyperparameter tuning per game.

This was the first demonstration that a general RL agent could learn complex tasks directly from raw sensory input.

Beyond DQN — Its Legacy

DQN’s success sparked a wave of descendants that remain core to modern AI systems:

| Successor | Core Idea | Year |

|---|---|---|

| Double DQN | Decouples action selection and evaluation to reduce over-estimation bias | 2016 |

| Dueling DQN | Separates state value and advantage streams for better generalization | 2016 |

| Prioritized Replay | Samples high-TD-error experiences more often | 2016 |

| Rainbow DQN | Combines six improvements (Double, Dueling, NoisyNets, etc.) | 2017 |

| AlphaGo / AlphaZero / MuZero | Integrate Q-value estimation into Monte Carlo Tree Search | — |

These models now power applications in robotics, game AI, finance, and autonomous control.

Conceptual Summary

Policy Gradient Methods (Williams, 1992; REINFORCE)

What Policy Gradients Optimize (High-Level)

Policy Gradient (PG) methods optimize the expected return directly by adjusting the parameters of a stochastic policy $\pi_\theta(a \mid s)$. Using the likelihood-ratio trick, the vanilla REINFORCE gradient is:

\[\nabla_\theta J(\theta) = \mathbb{E}_{\pi_\theta}\!\left[\nabla_\theta \log \pi_\theta(a_t\mid s_t) \, R_t \right].\]This increases the probability of actions that produced high returns — effectively a form of reward-weighted imitation. The key conceptual win over Q-learning–style methods is that policy gradients naturally support continuous actions and differentiable policies.

(www-anw.cs.umass.edu) Why PG Methods Matter (Context & Impact)

Before PG, many RL successes were value-based and mainly discrete. PG unlocked robotics/locomotion and modern actor-critic families (A2C/A3C, TRPO, PPO, SAC) by optimizing distributions over actions rather than action values. (spinningup.openai.com)

Intuition + Variance Reduction

REINFORCE updates are simple but high-variance (episode-level credit assignment). Subtracting a baseline $b(s)$ leaves the expectation unbiased yet reduces variance:

\[\nabla_\theta J(\theta) = \mathbb{E}_{\pi_\theta}\!\left[\nabla_\theta \log \pi_\theta(a_t\mid s_t)\,\big(R_t - b(s_t)\big)\right].\]Choosing $b(s) = V^\pi(s)$ yields the advantage function $A^\pi(s,a) = Q^\pi(s,a) - V^\pi(s)$ — a cornerstone of modern policy gradient methods such as Generalized Advantage Estimation (GAE) in PPO and TRPO.

(papers.neurips.cc) Takeaway: Use advantage estimates (not raw returns) to reduce variance and stabilize learning.

Derivation (Sketch)

Objective

\[J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta}\!\left[\sum_t \gamma^t r_t\right].\]Apply log-derivative trick

\[\nabla_\theta p_\theta(\tau) = p_\theta(\tau)\sum_t \nabla_\theta \log \pi_\theta(a_t\mid s_t).\]Exchange gradient and expectation to obtain REINFORCE

This leads directly to the policy gradient estimator underlying REINFORCE.

Use causality

At time $t$, only future rewards contribute to the gradient—past rewards are irrelevant (“don’t let the past distract you”).

Subtract a baseline

Subtracting any baseline independent of $a_t$ yields an unbiased but lower-variance estimator. The advantage function then emerges naturally as a centered return signal.

(spinningup.openai.com) Practical Tricks (That Actually Help)

Normalize returns and advantages per batch

Standardizing advantages within each batch helps stabilize learning and prevents excessively large gradients.

Entropy bonus

| Add an entropy regularization term $\beta \,\mathcal{H}(\pi_\theta(\cdot | s))$ to encourage exploration and avoid premature convergence to deterministic policies. |

Clipping and learning rate scheduling

Use gradient clipping and learning rate warmups to control instability and smooth early training dynamics.

Longer batches

Collecting more trajectories per update reduces variance of gradient estimates and improves stability at the cost of slower updates.

Logging and monitoring

Track moving averages of episodic return and key loss components (policy loss, entropy loss, value loss). Logging helps diagnose instability and detect performance regressions.

(Proceedings of Machine Learning Research) If you prefer a learning-curve image, generate it from the code below (average episodic return vs. episodes) and embed it as Figure 3.5.

Pseudocode (REINFORCE with Baseline)

Initialize θ; optional value net Vψ(s)

repeat for each iteration:

D ← ∅

for k in 1..num_episodes_per_batch:

τ ← rollout πθ in env → {s_t, a_t, r_t}

D ← D ∪ τ

For each τ in D: compute discounted returns G_t

If using baseline:

Ā_t ← G_t − Vψ(s_t) # advantage estimate

Update ψ by minimizing (Vψ(s_t) − G_t)^2

else:

Ā_t ← (G_t − mean(G)) / std(G)

Update θ by ascending ∑_t log πθ(a_t\mid s_t) · Ā_t + β·H[πθ(·\mid s_t)]

until solved

PyTorch Snippet — Discrete Actions (CartPole)

# REINFORCE on CartPole-v1 (discrete) — minimal, clear

import gym, torch, torch.nn as nn, torch.optim as optim

class Policy(nn.Module):

def __init__(self, obs_dim=4, act_dim=2):

super().__init__()

self.net = nn.Sequential(

nn.Linear(obs_dim, 128), nn.ReLU(),

nn.Linear(128, act_dim), nn.Softmax(dim=-1)

)

def forward(self, x): return self.net(x)

env = gym.make("CartPole-v1")

pi = Policy(); opt = optim.Adam(pi.parameters(), lr=1e-2)

gamma, entropy_coef = 0.99, 0.01

for ep in range(600):

s, _ = env.reset()

logps, rews, ents = [], [], []

done = False

while not done:

s_t = torch.tensor(s, dtype=torch.float32)

probs = pi(s_t); dist = torch.distributions.Categorical(probs)

a = dist.sample()

s, r, done, _, _ = env.step(a.item())

logps.append(dist.log_prob(a))

ents.append(dist.entropy())

rews.append(r)

# discounted returns

G, R = 0.0, []

for r in reversed(rews):

G = r + gamma * G

R.insert(0, G)

R = torch.tensor(R, dtype=torch.float32)

R = (R - R.mean()) / (R.std() + 1e-8) # baseline via normalization

loss = -(torch.stack(logps) * R).sum() - entropy_coef * torch.stack(ents).sum()

opt.zero_grad(); loss.backward(); opt.step()

if ep % 50 == 0:

print(f"Episode {ep} \mid loss {loss.item():.3f} \mid return {sum(rews):.1f}")

Why this works: REINFORCE uses Monte-Carlo returns and a (normalized) baseline; an entropy bonusprevents premature collapse.

PyTorch Snippet — Continuous Actions (Pendulum or MountainCarContinuous)

This shows a Gaussian policy with learnable mean and log-std. For simplicity, we clamp actions to env bounds (tanh-squash with proper log-prob correction is more exact but longer).

# REINFORCE for continuous control (Gaussian policy)

import gym, torch, torch.nn as nn, torch.optim as optim

import numpy as np

env = gym.make("Pendulum-v1") # continuous action in [-2, 2]

obs_dim = env.observation_space.shape[0]

act_dim = env.action_space.shape[0]

act_low = torch.tensor(env.action_space.low, dtype=torch.float32)

act_high = torch.tensor(env.action_space.high, dtype=torch.float32)

class GaussianPolicy(nn.Module):

def __init__(self):

super().__init__()

self.body = nn.Sequential(

nn.Linear(obs_dim, 128), nn.Tanh(),

nn.Linear(128, 128), nn.Tanh()

)

self.mu = nn.Linear(128, act_dim)

self.log_std = nn.Parameter(torch.zeros(act_dim)) # global log-std

def forward(self, s):

h = self.body(s)

mu = self.mu(h)

std = torch.exp(self.log_std)

return mu, std

pi = GaussianPolicy()

opt = optim.Adam(pi.parameters(), lr=3e-4)

gamma, entropy_coef = 0.99, 1e-3

for ep in range(800):

s, _ = env.reset()

logps, rews, ents = [], [], []

done = False

while not done:

s_t = torch.tensor(s, dtype=torch.float32)

mu, std = pi(s_t)

dist = torch.distributions.Normal(mu, std)

a = dist.sample() # sample from Gaussian policy

a_env = torch.clamp(a, act_low, act_high) # simple bounding

ns, r, done, _, _ = env.step(a_env.detach().numpy())

logps.append(dist.log_prob(a).sum())

ents.append(dist.entropy().sum())

rews.append(float(r))

s = ns

# discounted returns + normalize (baseline)

G, R = 0.0, []

for r in reversed(rews):

G = r + gamma * G

R.insert(0, G)

R = torch.tensor(R, dtype=torch.float32)

R = (R - R.mean()) / (R.std() + 1e-8)

loss = -(torch.stack(logps) * R).sum() - entropy_coef * torch.stack(ents).sum()

opt.zero_grad(); loss.backward(); opt.step()

if ep % 50 == 0:

print(f"[Cont] Episode {ep} \mid return {sum(rews):.1f}")

Note: For production-grade continuous PG, prefer actor-critic (with learned (V_\psi)), GAE, and proper tanh-squash log-prob correction. SAC adds max-entropy objectives to further stabilize/encourage exploration. (Proceedings of Machine Learning Research)

Debugging & Evaluation (What to Watch)

Learning curves: plot average episodic return (sliding window 50–100 eps).

Entropy: should decline gradually (too fast ⇒ stuck; too slow ⇒ wandering).

Variance: if unstable, increase batch size (more episodes per update), reduce LR, or improve baseline (learn (V_\psi)).

Sanity checks: random policy baseline; seed reproducibility; verify action scaling for continuous envs.

Where This Leads (Modern PG)

A2C/A3C: lower variance with learned critic; parallelism for speed.

TRPO/PPO: constrain updates (trust regions/clipping) to avoid destructive steps.

SAC: off-policy + maximum entropy with strong stability in continuous control. (spinningup.openai.com)

Actor–Critic Methods (A3C, 2016)

The Actor Critic framework represents one of the most elegant and transformative ideas in reinforcement learning the unification of two paradigms that had long evolved separately: policy-based methods and value-based methods. Before its introduction, algorithms either focused on learning a value function (like Q-learning and DQN) or directly optimizing a policy (like REINFORCE and vanilla policy gradients). Each came with advantages and limitations. Value-based methods were data-efficient but unstable in continuous action spaces, while policy-based methods handled continuous domains naturally but suffered from high variance and slow convergence.

The introduction of the Actor–Critic model in the early 2000s provided a hybrid that could learn efficiently in both settings, and it was later refined into one of the most practical, high-performance reinforcement learning architectures: the Asynchronous Advantage Actor–Critic (A3C) algorithm, developed by Volodymyr Mnih and colleagues at DeepMind in 2016.

A3C quickly became one of the most influential algorithms in modern RL history — a design so effective that nearly every advanced RL method that followed, including A2C, PPO, and SAC, builds on its principles.

The Intuition Behind Actor–Critic

At its heart, the Actor–Critic method introduces two learning systems that work in tandem. The actor is responsible for deciding what to do — it learns a policy $\pi_\theta(a\mid s)$ that defines a probability distribution over actions given a state. The critic, on the other hand, evaluates how good that decision was — it estimates the value function $V_w(s)$, which represents the expected future reward from that state.

This design addresses one of the biggest weaknesses of pure policy gradient methods like REINFORCE: the instability caused by high variance in return estimates. REINFORCE updates its policy only after an episode ends, basing the gradient entirely on the cumulative return, which can fluctuate wildly between runs. By incorporating a critic that estimates the value of each state, Actor–Critic methods can adjust the policy using a much more stable signal: the advantage function, which measures how much better an action performed compared to the critic’s expectation.

Mathematically, this update can be expressed as:

\[\nabla_\theta J(\theta) \;=\; \mathbb{E}_{\pi_\theta}\!\Big[\, \nabla_\theta \log \pi_\theta(a_t\mid s_t)\, \big(R_t - V_w(s_t)\big) \,\Big]\,,\]where $R_t$ is the return and $V_w(s_t)$ is the critic’s value estimate. The term $\big(R_t - V_w(s_t)\big)$ is known as the advantage — a measure of relative performance that stabilizes updates and reduces noise in the learning process.

This two-network dynamic is powerful: the actor pushes the policy toward actions that improve expected return, while the critic grounds those updates in context, providing smoother and more reliable gradient feedback.

The Innovation of A3C

Although the Actor Critic structure had existed before A3C, DeepMind’s version revolutionized the architecture through asynchronous parallelization.

In earlier methods like DQN or Advantage Actor Critic (A2C), the agent interacted with the environment sequentially. This sequential interaction introduced correlations between states and actions leading to instability and inefficient exploration. A3C broke this bottleneck by launching multiple independent agents (workers) in parallel, each running its own copy of the environment.

Each worker maintains a local copy of the model that interacts with the environment, collects trajectories, and periodically sends gradient updates to a shared global network. This global model aggregates updates asynchronously from all workers.

The result was extraordinary. Instead of needing powerful GPUs, A3C could achieve state-of-the-art performance on dozens of Atari games using only CPUs. The asynchronous design not only accelerated learning (by making better use of computational resources) but also introduced diversity into experience collection. Each worker explores different parts of the environment, reducing correlation in training data and enhancing the robustness of the learned policy.

Each worker interacts with its own environment instance and periodically updates a global shared network asynchronously. The global model accumulates gradients from multiple environments, leading to faster convergence and more stable updates.

The Mathematics of A3C

The A3C model builds upon the actor–critic structure with an important enhancement — the Advantage function $A(s_t, a_t) = R_t + \gamma V(s_{t+1}) - V(s_t)$. This term allows the network to assess how much better an action was compared to the expected value at that state. The total loss in A3C combines three components:

Actor Loss

Encourages actions that improve the policy:

\[L_{\text{actor}} = -\log \pi_\theta(a_t\mid s_t)\, A(s_t, a_t)\]Critic Loss

Minimizes the error in the value function:

\[L_{\text{critic}} = (R_t - V(s_t))^2\]Entropy Loss

Maintains policy diversity and avoids early convergence:

\[L_{\text{entropy}} = -\beta\, H(\pi_\theta)\]Total Loss

These components are combined into a total loss:

\[L = L_{\text{actor}} + c_v L_{\text{critic}} - c_H L_{\text{entropy}}\]The entropy term $H(\pi_\theta)$ acts as a regularizer that encourages the policy to remain uncertain when appropriate, promoting exploration and preventing the network from converging prematurely to suboptimal deterministic policies.

Implementation Walkthrough

Below is a simplified single-threaded implementation of A3C in PyTorch. While true A3C operates asynchronously with multiple workers running in parallel, this version captures the essence of the method in a self-contained example that can run on a single machine.

import torch

import torch.nn as nn

import torch.optim as optim

import gym

class ActorCritic(nn.Module):

def __init__(self, state_dim, action_dim):

super().__init__()

self.shared = nn.Sequential(nn.Linear(state_dim, 128), nn.ReLU())

self.actor = nn.Linear(128, action_dim)

self.critic = nn.Linear(128, 1)

def forward(self, state):

x = self.shared(state)

probs = torch.softmax(self.actor(x), dim=-1)

value = self.critic(x)

return probs, value

env = gym.make("CartPole-v1")

model = ActorCritic(4, 2)

optimizer = optim.Adam(model.parameters(), lr=0.001)

gamma, value_coef, entropy_coef = 0.99, 0.5, 0.01

for episode in range(1000):

state, _ = env.reset()

log_probs, values, rewards, entropies = [], [], [], []

done = False

while not done:

state_tensor = torch.tensor(state, dtype=torch.float32)

probs, value = model(state_tensor)

dist = torch.distributions.Categorical(probs)

action = dist.sample()

next_state, reward, done, _, _ = env.step(action.item())

log_probs.append(dist.log_prob(action))

values.append(value)

rewards.append(reward)

entropies.append(dist.entropy())

state = next_state

returns, R = [], 0

for r in reversed(rewards):

R = r + gamma * R

returns.insert(0, R)

returns = torch.tensor(returns, dtype=torch.float32)

values = torch.cat(values)

advantages = returns - values.squeeze()

actor_loss = -(torch.stack(log_probs) * advantages.detach()).sum()

critic_loss = advantages.pow(2).sum()

entropy_loss = -torch.stack(entropies).sum()

loss = actor_loss + value_coef * critic_loss + entropy_coef * entropy_loss

optimizer.zero_grad()

loss.backward()

optimizer.step()

if episode % 50 == 0:

print(f"Episode {episode}: Loss = {loss.item():.3f}, Return = {sum(rewards)}")

This simple version demonstrates the dual-network architecture in action. The shared layers extract state features, which then branch into the actor (policy head) and the critic (value head). The actor samples an action, the critic evaluates it, and the advantage function determines how much the actor should adjust its behavior. Over time, the network learns both to predict values accurately and to favor beneficial actions.

The asynchronous version expands this logic across multiple worker processes, each maintaining local gradients that are periodically merged into a global model. This design enables A3C to fully exploit the computational power of multicore CPUs, resulting in up to 10× faster learning and more stable convergence compared to DQN and vanilla policy gradients.

Why A3C Was a Milestone

The impact of A3C cannot be overstated. It introduced a practical blueprint for scaling reinforcement learning across multiple environments, breaking the reliance on GPUs and replay buffers. It showed that robust, human-level performance could be achieved using simple architectures powered by parallel computation.

Its asynchronous updates acted as a form of implicit regularization, reducing the overfitting and instability often observed in on-policy methods. Moreover, the idea of computing the advantage and the use of entropy bonuses became standard across nearly all subsequent algorithms. In short, A3C was the bridge between early deep RL methods and the modern policy optimization era.

Beyond A3C: The Road to PPO and SAC

Following A3C, researchers introduced A2C (Advantage Actor–Critic) a synchronous version that updated all workers simultaneously, making it easier to parallelize on GPUs. Later, Proximal Policy Optimization (PPO) refined this further by introducing clipped objectives to prevent large destructive updates, leading to even more stable performance.

Meanwhile, Soft Actor Critic (SAC) extended the Actor Critic design to continuous domains using off-policy learning and maximum entropy objectives for better exploration. These later methods all trace their conceptual lineage back to the Actor–Critic framework and the asynchronous brilliance of A3C.

GitHub and Reference Material

For practical implementations, you can explore the official PyTorch examples, which include a fully functional Actor–Critic and A3C setup for environments like CartPole and Pong. DeepMind’s original TensorFlow code and OpenAI’s Baselines library also provide detailed implementations and performance benchmarks.

GitHub Links:

PyTorch: Actor–Critic Implementation

DeepMind: A3C and ACER Repository https://github.com/Kaixhin/ACER

OpenAI Baselines: A2C/A3C Implementation https://github.com/openai/baselines/tree/master/baselines/a2c

Proximal Policy Optimization (PPO, 2017)

The Need for Stable Policy Optimization

By 2016, policy-gradient methods had matured through A3C’s introduction of asynchronous updates and baseline critics, yet they remained fragile. A single large policy update could cause catastrophic performance collapse, especially when training complex neural networks in continuous environments. The core problem was that vanilla policy gradients and even trust-region methods like TRPO (Trust Region Policy Optimization, 2015) required second-order approximations and were computationally heavy.

Proximal Policy Optimization (PPO), proposed by John Schulman et al. (2017) at OpenAI, offered a simple yet powerful alternative. It preserved the reliability of TRPO while remaining computationally efficient and easy to implement. PPO became the default policy-optimization method for OpenAI’s research and was later used in a wide range of their flagship projects from OpenAI Five (Dota 2) to Dactyl (robotic hand manipulation) to the fine-tuning processes that guided GPT and InstructGPT training.

The Core Idea

The goal of Proximal Policy Optimization (PPO) is to make policy updates trustworthy and consistent. Instead of taking large, unstable gradient steps, PPO restricts how much the new policy can deviate from the old one.

Let $\pi_\theta(a \mid s)$ be the current policy and $\pi_{\text{old}}(a \mid s)$ the previous policy. We define the probability ratio:

\[r_t(\theta) = \frac{\pi_\theta(a_t\mid s_t)}{\pi_{\text{old}}(a_t\mid s_t)}\]This ratio measures how much the new policy has changed its probability of taking the same action in the same state. The objective is then to maximize the expected advantage, but with a clip to prevent too large of a policy shift:

\[L^{\text{CLIP}}(\theta) = \mathbb{E}_t\!\left[\min\left(r_t(\theta) A_t,\; \text{clip}\big(r_t(\theta),\, 1-\epsilon,\, 1+\epsilon\big) A_t\right)\right]\]Here $\epsilon$ is a small constant (typically 0.1–0.2) that bounds the step size. If the new policy attempts to change too much, the clipped term prevents further increase in the objective value, thereby keeping updates proximal to the old policy.

This seemingly minor change had massive impact: it combined the stability of TRPO with the simplicity of REINFORCE — all without requiring second-order derivatives or complex constraints.

Why It Worked

PPO brought a level of practical reliability that made deep reinforcement learning trainable on large-scale tasks. Its balance between exploration and trust-region control allowed researchers to train massive agents safely. It became the foundation of OpenAI’s entire RL stack from game agents to language models.

OpenAI Five (2018) used PPO to train neural policies controlling five Dota 2 heroes, scaling to thousands of simulated matches per day across hundreds of workers.

Dactyl (2018) employed PPO to train a robotic hand to manipulate a cube purely in simulation before transferring to a real-world robot.

InstructGPT and ChatGPT later used PPO in the RLHF stage (Reinforcement Learning from Human Feedback) to fine-tune language models based on human preferences and ranking data.

These projects cemented PPO as a cornerstone algorithm for safe, scalable, and high-performance policy learning. OpenAI described it as “our go-to algorithm for virtually all reinforcement learning problems.”

Mathematical Perspective

The PPO loss function balances three objectives: the clipped surrogate objective, the value function loss, and an entropy bonus for exploration.

\[L^{\text{PPO}}(\theta) = \mathbb{E}_t \Big[ L^{\text{CLIP}}(\theta) - c_1 (V_\theta(s_t) - R_t)^2 + c_2 \, H[\pi_\theta(\cdot\mid s_t)] \Big].\]Here, $c_1$ and $c_2$ are coefficients that balance value function accuracy and entropy regularization. This formulation shows how PPO jointly optimizes for return maximization while maintaining diversity and value accuracy — a three-way harmony between stability, exploration, and performance.

Implementation in Practice (Python)

Below is a concise PyTorch implementation illustrating the core logic of PPO. It operates on a discrete environment like CartPole and uses mini-batch optimization over trajectories collected by the current policy.

import torch, torch.nn as nn, torch.optim as optim

import gym

from torch.distributions import Categorical

class PPOAgent(nn.Module):

def __init__(self, state_dim, action_dim):

super().__init__()

self.fc = nn.Sequential(nn.Linear(state_dim, 128), nn.ReLU())

self.policy = nn.Linear(128, action_dim)

self.value = nn.Linear(128, 1)

def forward(self, x):

x = self.fc(x)

probs = torch.softmax(self.policy(x), dim=-1)

v = self.value(x)

return probs, v

env = gym.make("CartPole-v1")

agent = PPOAgent(4, 2)

opt = optim.Adam(agent.parameters(), lr=3e-4)

eps_clip, gamma, value_coeff, entropy_coeff = 0.2, 0.99, 0.5, 0.01

for update in range(500):

logps, vals, rews, acts, states = [], [], [], [], []

s, _ = env.reset()

done = False

while not done:

s_t = torch.tensor(s, dtype=torch.float32)

probs, v = agent(s_t)

dist = Categorical(probs)

a = dist.sample()

ns, r, done, _, _ = env.step(a.item())

logps.append(dist.log_prob(a))

vals.append(v)

rews.append(r)

acts.append(a)

states.append(s_t)

s = ns

# compute discounted returns

R, returns = 0, []

for r in reversed(rews):

R = r + gamma * R

returns.insert(0, R)

returns = torch.tensor(returns, dtype=torch.float32)

vals = torch.cat(vals).squeeze()

adv = returns - vals.detach()

adv = (adv - adv.mean()) / (adv.std() + 1e-8)

# old policy probabilities

old_logps = torch.stack(logps).detach()

for _ in range(4): # PPO update epochs

probs, vals_new = agent(torch.stack(states))

dist = Categorical(probs)

new_logps = dist.log_prob(torch.stack(acts))

ratio = (new_logps - old_logps).exp()

surr1 = ratio * adv

surr2 = torch.clamp(ratio, 1 - eps_clip, 1 + eps_clip) * adv

actor_loss = -torch.min(surr1, surr2).mean()

critic_loss = (returns - vals_new.squeeze()).pow(2).mean()

entropy = dist.entropy().mean()

loss = actor_loss + value_coeff * critic_loss - entropy_coeff * entropy

opt.zero_grad()

loss.backward()

opt.step()

if update % 50 == 0:

print(f"Update {update}: loss={loss.item():.3f}, return={sum(rews)}")

This implementation demonstrates the essence of PPO: the ratio ( r_t ), the clipping mechanism, the advantage function, and the dual optimization of policy and value heads. It shows why PPO is both stable and simple enough to serve as a default algorithm in modern RL frameworks.

PPO in OpenAI Research

PPO became the engine behind some of OpenAI’s most iconic achievements. In OpenAI Five, agents trained with PPO played millions of Dota 2 matches to reach professional skill levels. The algorithm enabled fine-grained control over learning rates and policy stability across hundreds of simulations running in parallel. In Dactyl, PPO allowed a robot hand to master cube rotation using simulated experience and domain randomization before transferring to the real world a milestone in sim-to-real transfer. Later, during the development of InstructGPT, OpenAI reused PPO in a different context: instead of game scores or physical rewards, the agent optimized for human feedback through a reward model trained on annotator preferences. This innovation laid the foundation for the alignment techniques that power ChatGPT today.

The common thread through all these projects was PPO’s reliability. It allowed OpenAI to scale up policy optimization without fear of collapse or instability something no previous algorithm offered with the same ease.

Why PPO Became the Default

The beauty of PPO lies in its simplicity. It can be implemented in less than 200 lines of Python, yet achieves competitive performance with algorithms that took years to develop. It requires no complex Lagrange multipliers, no second-order Hessians, and no matrix inversions. Despite its simplicity, it achieves consistent and robust results across a wide range of tasks from robotics to language model fine-tuning. For many researchers, PPO was the moment when reinforcement learning became reliable enough to be practical.

The Math & Intuition of Reinforcement Learning

Reinforcement Learning (RL) studies how an agent should act in an environment to maximize long-term reward. The mathematics gives a compact language for this story; the intuition makes the symbols feel inevitable. Keep the picture of a maze or tic-tac-toe in mind: each move nudges you toward or away from a win, and the math formalizes how those nudges accumulate.

Layer 1 MDPs: the world as state, action, reward

An RL problem is modeled as a Markov Decision Process: $\text{MDP}=(S,A,P,R,\gamma)$. Here $S$ is the set of states, $A$ the actions, $P(s’\mid s,a)$ the transition law, $R(s,a)$ the reward, and $\gamma \in [0,1]$ the discount that down-weights distant outcomes. A policy $\pi(a\mid s)$ maps a state to a distribution over actions.

The agent’s objective is to maximize expected return:

\[G_t = \sum_{k=0}^{\infty} \gamma^k r_{t+k+1}\]Formally, we seek a policy $\pi^*$ that maximizes:

\[J(\pi) = \mathbb{E}_{\pi}\left[\sum_{t \ge 0} \gamma^t r_{t+1}\right]\]under the environment dynamics.

How to solve it: specify $S, A, P, R, \gamma$ for your task; choose whether to compute exact values with a known model $P$ (dynamic programming) or learn from data when $P$ is unknown (temporal-difference and policy gradient methods). That fork — planning vs. learning — determines everything that follows.

Layer 2 Bellman equations: recursive value reasoning

For a fixed policy $\pi$, the state-value function satisfies

\[V^{\pi}(s) = \mathbb{E}_{a\sim\pi,\, s'\sim P}\!\left[r(s,a) + \gamma\, V^{\pi}(s')\right].\]The action-value function satisfies

\[Q^{\pi}(s,a) = \mathbb{E}_{s'\sim P}\!\left[r(s,a) + \gamma\, \mathbb{E}_{a'\sim\pi}Q^{\pi}(s',a')\right].\]For optimal control (Bellman optimality), remove $\pi$ by taking a max:

\[V^*(s) = \max_{a}\, \mathbb{E}_{s'\sim P}\!\left[r(s,a) + \gamma\, V^*(s')\right], \qquad Q^*(s,a) = \mathbb{E}_{s'\sim P}\!\left[r(s,a) + \gamma\, \max_{a'} Q^*(s',a')\right].\]These recursions express a simple intuition: the value of “here” equals reward now plus the discounted value of “where you’ll land.” In a maze, squares adjacent to the exit inherit high value; value then “flows backward” across the grid by these equations (Bellman, 1957).

How to solve it in practice: if the model $P$ is known, you can iterate to convergence. For policy evaluation, initialize $V_0$ arbitrarily and update

\[V_{k+1}(s)=\sum_{a}\pi(a\mid s)\sum_{s'} P(s'\mid s,a)\,\big[\,R(s,a)+\gamma\,V_k(s')\,\big].\]Improve the policy greedily via

\[\pi_{k+1}(s)=\arg\max_{a}\ \sum_{s'} P(s'\mid s,a)\,\big[\,R(s,a)+\gamma\,V_k(s')\,\big],\]and alternate these two steps (policy iteration). Or collapse them into value iteration:

\[V_{k+1}(s)=\max_{a}\ \sum_{s'} P(s'\mid s,a)\,\big[\,R(s,a)+\gamma\,V_k(s')\,\big]\]until changes fall below a tolerance; then act greedily with respect to $V$. With grids and tic-tac-toe, this converges quickly and matches intuition: the closer to winning, the higher the value.

How to solve it in practice: if the model $P$ is known, you can iterate to convergence. For policy evaluation, initialize $V_0$ arbitrarily and update

\[V_{k+1}(s)=\sum_{a}\pi(a\mid s)\sum_{s'} P(s'\mid s,a)\,\big[\,R(s,a)+\gamma\,V_k(s')\,\big].\]Improve the policy greedily via

\[\pi_{k+1}(s)=\arg\max_{a}\ \sum_{s'} P(s'\mid s,a)\,\big[\,R(s,a)+\gamma\,V_k(s')\,\big],\]and alternate these two steps (policy iteration). Or collapse them into value iteration:

\[V_{k+1}(s)=\max_{a}\ \sum_{s'} P(s'\mid s,a)\,\big[\,R(s,a)+\gamma\,V_k(s')\,\big]\]until changes fall below a tolerance; then act greedily with respect to $V$. With grids and tic-tac-toe, this converges quickly and matches intuition: the closer to winning, the higher the value.

Layer 3 Q-Learning: learning optimal action values from experience

When the dynamics $P$ are unknown, Watkins’ Q-Learning learns $Q^*(s,a)$ directly from trajectories. The canonical update is

\[Q(s,a) \leftarrow Q(s,a) + \alpha\Big[\, r + \gamma \max_{a'} Q(s',a') - Q(s,a) \,\Big],\]with learning rate $\alpha \in (0,1]$. The agent typically uses $\epsilon$-greedy exploration: with probability $1-\epsilon$ it selects $\arg\max_a Q(s,a)$, and with probability $\epsilon$ it explores a random action. Over repeated visits, actions that ultimately lead to high returns accumulate larger $Q$-values; poor actions decay.

How to solve it step by step: initialize $Q(s,a)$ (often to zero). For each episode, start in some $s$; at each step pick an action via $\epsilon$-greedy, observe reward $r$ and next state $s’$, apply the update above, then move to $s’$. Anneal $\epsilon$ over time and stop when $Q$ stabilizes or your target performance is reached.

With function approximation (e.g., a neural network $\hat Q_\theta$), minimize the squared TD error

\[\mathcal{L}_{\text{DQN}} = \Big( r + \gamma \max_{a'} \hat Q_{\theta^-}(s',a') - \hat Q_\theta(s,a) \Big)^2,\]using gradient descent; DQN stabilizes training with experience replay and a target network (parameters $\theta^-$) updated periodically.

by gradient descent; DeepMind’s DQN adds experience replay and target networks for stability (Mnih et al., 2015).

Layer 4 — Policy gradients: optimizing behavior directly

Policy gradient methods skip value maximization and adjust a parameterized policy πθ(a∣s)πθ(a∣s) to maximize expected return. Write the objective as

\[J(\theta)=\mathbb{E}_{\tau\sim\pi_\theta}\!\left[\sum_{t\ge 0}\gamma^t r_{t+1}\right]\]Using the likelihood-ratio trick (REINFORCE), the gradient becomes

\[\nabla_\theta J(\theta) = \mathbb{E}_{\tau\sim\pi_\theta}\!\left[\sum_{t} \nabla_\theta \log \pi_\theta(a_t \mid s_t)\, G_t\right]\]where GtGt is a return estimate from time tt. Intuitively, actions that led to high returns are made more likely, proportional to how sensitive the policy was to choosing them (Williams, 1992).

How to solve it step by step: sample trajectories by running $\pi_\theta$ in the environment; compute returns $G_t$ for each step (Monte-Carlo or bootstrapped estimates); form the stochastic gradient and apply the parameter update.

\[\widehat{\nabla_\theta J(\theta)}=\sum_{t}\nabla_\theta \log \pi_\theta(a_t \mid s_t)\, G_t\] \[\theta \leftarrow \theta + \eta\, \widehat{\nabla_\theta J(\theta)}\]This naïve estimator is high-variance, so two refinements are essential in practice.

First, subtract a baseline $b(s_t)$ without biasing the gradient:

\[\nabla_\theta J(\theta) = \mathbb{E}\!\left[\sum_t \nabla_\theta \log \pi_\theta(a_t \mid s_t)\,\big(G_t - b(s_t)\big)\right].\]Choosing $b(s)=V^\pi(s)$ yields the advantage $A^\pi(s,a)=Q^\pi(s,a)-V^\pi(s)$, focusing the update on how much better an action was than typical for that state (A2C/A3C; Mnih et al., 2016).

\[A^\pi(s,a)=Q^\pi(s,a)-V^\pi(s).\]A practical estimator uses the TD-error

\[\delta_t = r_{t+1} + \gamma\, V_\phi(s_{t+1}) - V_\phi(s_t),\]and its exponentially-weighted sums. Schulman et al. (2016) popularized Generalized Advantage Estimation (GAE):

\[\hat A_t=\sum_{\ell=0}^{\infty}(\gamma\lambda)^{\ell}\,\delta_{t+\ell},\qquad \lambda\in[0,1].\]Second, encourage exploration by adding entropy regularization. Augment the objective with

\[J_{\text{ent}}(\theta)=\beta\,\mathbb{E}_s\!\left[\,H\!\big(\pi_\theta(\cdot\mid s)\big)\right],\]where $H(\cdot)$ is the categorical entropy and $\beta>0$ controls strength. The resulting actor loss adds the entropy term, resisting premature collapse to deterministic policies and often improving robustness.

How to solve it end-to-end (actor–critic loop)

Initialize policy parameters $\theta$ and value-function parameters $\phi$. Roll out the policy to collect batches of $(s_t,a_t,r_{t+1},s_{t+1})$. Compute value targets $\hat V_{\text{target},t}$ and advantages $\hat A_t$ (via TD-errors or GAE). Update the critic and actor as follows, then repeat until returns plateau.

\[\mathcal{L}_{\text{critic}}=\sum_t\Big(\hat V_{\text{target},t}-V_\phi(s_t)\Big)^2\] \[\mathcal{L}_{\text{actor}}=-\sum_t \log \pi_\theta(a_t\mid s_t)\,\hat A_t\;-\;\beta\, H\!\big(\pi_\theta(\cdot\mid s_t)\big)\] \[\theta \leftarrow \theta - \eta\,\nabla_\theta \mathcal{L}_{\text{actor}},\qquad \phi \leftarrow \phi - \eta_V\, \nabla_\phi \mathcal{L}_{\text{critic}}.\]PPO keeps the same advantage-based objective but constrains each policy update to stay near the old policy by clipping the probability ratio, which stabilizes training (Schulman et al., 2017).

A final intuition check

If the maze exit lights up with a reward, Bellman equations let value “shine backward” through adjacent cells until the whole map encodes how promising each square is. Q-Learning learns this map by touching the walls and remembering what hurt or helped. Policy gradients skip the map and instead tune the agent’s instincts directly, rewarding it for sequences that ended well, dampening instincts that didn’t, and keeping a dash of randomness so it doesn’t stop searching too soon.

The Code

This section gives you a compact, runnable path from a tabular baseline to a neural one. We start with Q-Learning on FrozenLake to make the update rule concrete, then scale the same idea to function approximation with a tiny PyTorch DQN on CartPole.

Read the short motivation, skim the pseudocode to lock in the algorithmic loop, and run the Python to verify you can reproduce learning on your machine.

Q-Learning learns action values in a table by bootstrapping from its own estimates. At each step you act ε-greedily from the current state, observe the next state and reward, and push the old entry toward the target r+γmaxa′Q(s′,a′)r+γmaxa′Q(s′,a′). Exploration starts high and decays so the agent first discovers outcomes and then exploits what it has learned. On FrozenLake, using a deterministic variant keeps the learning curve focused on the update itself rather than stochastic slips.

Q-Learning (pseudocode)

for episode in range(E):

s = env.reset()

done = False

while not done:

a = epsilon_greedy(Q[s])

s2, r, done, _ = env.step(a)

Q[s, a] = Q[s, a] + alpha * (r + gamma * (0 if done else max(Q[s2])) - Q[s, a])

s = s2

Tabular Q-Learning — FrozenLake

import gymnasium as gym

import numpy as np

env = gym.make("FrozenLake-v1", is_slippery=False) # deterministic for clarity

nS, nA = env.observation_space.n, env.action_space.n

Q = np.zeros((nS, nA))

alpha, gamma = 0.8, 0.95

epsilon, eps_min, eps_decay = 1.0, 0.05, 0.995

episodes, max_steps = 800, 100

def epsilon_greedy(s):

return env.action_space.sample() if np.random.rand() < epsilon else np.argmax(Q[s])

for _ in range(episodes):

s, _ = env.reset()

for _ in range(max_steps):

a = epsilon_greedy(s)

s2, r, term, trunc, _ = env.step(a)

done = term or trunc

best_next = 0.0 if done else np.max(Q[s2])

Q[s, a] += alpha * (r + gamma * best_next - Q[s, a])

s = s2

if done: break

print("Sample Q[0]:", Q[0])

Deep Q-Networks replace the table with a neural network Qθ(s,⋅)Qθ(s,⋅), train it on random mini-batches from a replay buffer to break temporal correlations, and stabilize targets with a lagged copy Qθ−Qθ−. A small MLP is enough for CartPole.

Using the Double DQN trick selecting the next action with the policy network but evaluating it with the target network reduces overestimation bias and improves stability without extra complexity. Keep ε-greedy exploration early, start learning only after the buffer has a few hundred to a thousand transitions, and periodically sync the target network.

DQN (pseudocode)

initialize policy network Qθ and target network Qθ− (copy of θ)

initialize replay buffer B

for each episode:

s = env.reset()

done = False

while not done:

with prob ε: a = random action

else: a = argmax_a Qθ(s,a)

s2, r, done = env.step(a); store (s,a,r,s2,done) in B

if len(B) ≥ batch_size:

sample (S,A,R,S2,D) ~ B

y = R + γ * (1 − D) * Qθ−(S2, argmax_a Qθ(S2,a)) # Double DQN target

loss = Huber(Qθ(S,A), y); backprop on θ

every K steps: θ− ← θ

s ← s2

DQN — CartPole (PyTorch) with Replay Buffer + Target Network

import gymnasium as gym, numpy as np, torch, random, collections

import torch.nn as nn, torch.optim as optim

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

env = gym.make("CartPole-v1")

class ReplayBuffer:

def __init__(self, cap=50_000): self.buf = collections.deque(maxlen=cap)

def push(self, s,a,r,s2,d): self.buf.append((s,a,r,s2,d))

def sample(self, bs=64):

batch = random.sample(self.buf, bs)

s,a,r,s2,d = map(np.array, zip(*batch))

to = lambda x,dt: torch.tensor(x, dtype=dt, device=device)

return (to(s,torch.float32), to(a,torch.int64).unsqueeze(1),

to(r,torch.float32).unsqueeze(1), to(s2,torch.float32),

to(d,torch.float32).unsqueeze(1))

def __len__(self): return len(self.buf)

class QNet(nn.Module):

def __init__(self, obs_dim, n_act):

super().__init__()

self.net = nn.Sequential(

nn.Linear(obs_dim,128), nn.ReLU(),

nn.Linear(128,128), nn.ReLU(),

nn.Linear(128,n_act)

)

def forward(self,x): return self.net(x)

obs_dim, n_act = env.observation_space.shape[0], env.action_space.n

policy, target = QNet(obs_dim,n_act).to(device), QNet(obs_dim,n_act).to(device)

target.load_state_dict(policy.state_dict()); target.eval()

opt = optim.Adam(policy.parameters(), lr=1e-3)

gamma = 0.99

buf = ReplayBuffer()

batch_size, start_learn, sync_every = 64, 1000, 1000

eps, eps_min, eps_decay = 1.0, 0.05, 0.995

global_step = 0

def act(s, eps):

if random.random() < eps: return env.action_space.sample()

with torch.no_grad():

q = policy(torch.tensor(s, dtype=torch.float32, device=device).unsqueeze(0))

return int(q.argmax(1))

for ep in range(300):

s, _ = env.reset(seed=random.randint(0, 10_000))

done = False

while not done:

a = act(s, eps)

s2, r, term, trunc, _ = env.step(a)

done = term or trunc

buf.push(s,a,r,s2,done)

s = s2

global_step += 1

if len(buf) >= start_learn:

S,A,R,S2,D = buf.sample(batch_size)

with torch.no_grad():

next_a = policy(S2).argmax(1, keepdim=True)

target_q = R + gamma * (1 - D) * target(S2).gather(1, next_a)

q = policy(S).gather(1, A)

loss = nn.functional.smooth_l1_loss(q, target_q)

opt.zero_grad(); loss.backward()

nn.utils.clip_grad_norm_(policy.parameters(), 10.0)

opt.step()

if global_step % sync_every == 0:

target.load_state_dict(policy.state_dict())

eps = max(eps_min, eps * eps_decay)

print("Training complete.")

If you prefer studying from existing, public code, the classic reference implementations and a Colab-backed tutorial are freely available.

Denny Britz’s Reinforcement Learning GitHub repository includes tabular algorithms like Q-Learning in clean NumPy form at github.com/dennybritz/reinforcement-learning, and the official PyTorch tutorial “Reinforcement Q-Learning” provides a step-by-step DQN notebook at pytorch.org/tutorials/…/reinforcement_q_learning that you can open directly in Colab.

RL Playgrounds

Reinforcement Learning playgrounds are sandboxes for rapid, reproducible experimentation where you prototype algorithms, visualize behavior, tune rewards, and debug safely. OpenAI Gym/Gymnasium offers standardized single-agent benchmarks with a consistent API, from CartPole (balance), to LunarLander (control), to MountainCar (sparse rewards), letting you swap environments with minimal code changes. For multi-agent settings, PettingZoo extends the idea to competitive and cooperative tasks with clear interfaces for parallel or turn-based interactions. Together, these suites enable apples-to-apples comparisons, curriculum design from simple to complex, and fast iteration cycles that turn ideas into working policies.

We’ll create a tiny playground runner that executes short rollouts in three classic Gymnasium tasks CartPole, MountainCar, and (optionally) LunarLander then saves a compact results.json file summarizing average and best returns. Next, we’ll use a clean, interactive HTML dashboard to visualize those results with a table, metric chips, and per-environment sparklines. This lets you prototype quickly, compare policies, and sanity-check learning without wiring a full training loop. CartPole should show fast improvement even with simple policies (dense rewards). MountainCar highlights sparse-reward momentum building (a tiny heuristic beats random). LunarLander adds noise/variance and requires Box2D; enable it to stress-test stability.

Python run rollouts

Save this as run_playgrounds.py and run it with python run_playgrounds.py –episodes 10. It uses random actions by default, with a small velocity-sign heuristic for MountainCar when –policy auto (default). LunarLander is commented out to keep dependencies light; see the note below to enable it.

# run_playgrounds.py

# Minimal Gymnasium playground runner for CartPole, MountainCar (+ optional LunarLander).

# Usage:

# pip install gymnasium

# # optional for LunarLander: pip install "gymnasium[box2d]" box2d-py

# python run_playgrounds.py --episodes 10

import json, random, argparse

from dataclasses import dataclass, asdict

from typing import List

import numpy as np

import gymnasium as gym

@dataclass

class EpisodeResult:

episode: int

return_: float

length: int

@dataclass

class EnvSummary:

env_id: str

policy: str

episodes: int

avg_return: float

best_return: float

returns: List[EpisodeResult]

def run_env(env_id: str, episodes: int = 5, max_steps: int = 1000, policy: str = "auto") -> EnvSummary:

env = gym.make(env_id)

results: List[EpisodeResult] = []

def act_random(_obs): return env.action_space.sample()

# MountainCar heuristic: push toward velocity sign to build momentum

def act_mountaincar(obs):

position, velocity = obs

return 2 if velocity > 0 else 0 # 0=left, 2=right

if policy == "auto" and "MountainCar" in env_id:

choose_action, used_policy = act_mountaincar, "heuristic(velocity-sign)"

else:

choose_action, used_policy = act_random, "random"

for ep in range(1, episodes+1):

obs, _ = env.reset(seed=random.randint(0, 10_000))

total_r = 0.0

for t in range(max_steps):

a = choose_action(obs)

obs, r, terminated, truncated, _ = env.step(a)

total_r += float(r)

if terminated or truncated:

results.append(EpisodeResult(ep, total_r, t+1))

break

else:

results.append(EpisodeResult(ep, total_r, max_steps))

env.close()

avg_r = float(np.mean([r.return_ for r in results]))

best_r = float(np.max([r.return_ for r in results]))

return EnvSummary(env_id, used_policy, episodes, avg_r, best_r, results)

def main():

ap = argparse.ArgumentParser()

ap.add_argument("--episodes", type=int, default=5, help="episodes per environment")

ap.add_argument("--max-steps", type=int, default=1000, help="max steps per episode")

ap.add_argument("--policy", type=str, default="auto", choices=["random","auto"], help="action policy")

ap.add_argument("--out", type=str, default="results.json", help="output JSON path")

args = ap.parse_args()

# Add "LunarLander-v2" here IF Box2D is installed:

# pip install "gymnasium[box2d]" box2d-py

envs = ["CartPole-v1", "MountainCar-v0"] # + ["LunarLander-v2"]

payload = {"generated_at": "", "episodes_per_env": args.episodes, "policy": args.policy, "envs":[]}